Project

AA5-2 (was EF1-15)

Robust Multilevel Training of Artificial Neural Networks

Project Heads

Michael Hintermüller, Carsten Gräser (until 12/2022)

Project Members

Qi Wang

Project Duration

01.02.2023 − 31.01.2026

Located at

WIAS

Description

Multilevel methods for training nonsmooth artificial neural networks have been developed, analyzed, and implemented. Tailored refining and coarsening strategies for the optimization parameters, in terms of the number of neurons, the number of layers, and the network architecture, have been studied. Efficient nonsmooth optimization methods are introduced and used to treat the level-specific problems. The framework can be applied to problems that have a multilevel structure, such as learned regularization in image processing, neural network-based PDE solvers, and learning-informed physics. Software is developed and made publicly available.

The motivation of this work is to train neural networks (NNs) with multilevel strategies. Training can be interpreted as a supervised learning problem: we need to find the optimal parameters of the network based on a given dataset. A successful training implies that the model can accurately predict unseen data, i.e., data not contained in the dataset. The objective function of our optimization problem can be written as a least-squares problem in the parameters of the NN, or with another loss function. To obtain an (approximately) optimal solution, the standard method approximates the objective function by a regularized Taylor model at each iteration. Minimizing this model is the major cost per iteration, and it depends crucially on the dimension of the problem. Even for a neural network with only one hidden layer with r nodes, there are 3r+1 unknowns in total (for scalar input and output: r input weights, r hidden biases, r output weights, and one output bias), so minimizing the objective function becomes expensive once r is moderately large. Hence, we want to reduce this cost by exploiting multilevel strategies: we reduce the number of nodes in the network according to a coarsening criterion, solve the problem in the smaller dimension, and then prolongate the result back to the original network. We start with one-hidden-layer networks and extend the approach to deep neural networks. In both cases, we aim to apply the multilevel strategy in the width of the network rather than in its depth.
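The parameter count and the coarsen/prolongate idea can be illustrated with a minimal sketch. It assumes a scalar-input, scalar-output network with a tanh activation, and it assumes a coarsening rule that simply keeps every second hidden node; the function names, the initial values, and that rule are hypothetical choices for illustration, not the project's actual strategy.

```python
import math

def init_params(r):
    """Hypothetical initial parameters for a scalar-input, scalar-output network."""
    return {
        "w_in": [0.1 * (i + 1) for i in range(r)],   # r input weights
        "b_in": [0.0] * r,                           # r hidden biases
        "w_out": [1.0 / (i + 1) for i in range(r)],  # r output weights
        "b_out": 0.0,                                # 1 output bias
    }

def num_params(p):
    """Total number of unknowns: 3r + 1 for r hidden nodes."""
    return len(p["w_in"]) + len(p["b_in"]) + len(p["w_out"]) + 1

def forward(p, x):
    """Network output for a scalar input x (tanh activation is an assumption)."""
    hidden = [math.tanh(w * x + b) for w, b in zip(p["w_in"], p["b_in"])]
    return sum(v * h for v, h in zip(p["w_out"], hidden)) + p["b_out"]

def restrict(p, keep):
    """Coarse network that retains only the hidden nodes indexed by keep."""
    return {
        "w_in": [p["w_in"][i] for i in keep],
        "b_in": [p["b_in"][i] for i in keep],
        "w_out": [p["w_out"][i] for i in keep],
        "b_out": p["b_out"],
    }

def prolongate(fine, coarse_old, coarse_new, keep):
    """Add the coarse-level correction to the fine parameters at the kept nodes."""
    out = {k: list(v) if isinstance(v, list) else v for k, v in fine.items()}
    for j, i in enumerate(keep):
        for key in ("w_in", "b_in", "w_out"):
            out[key][i] += coarse_new[key][j] - coarse_old[key][j]
    out["b_out"] += coarse_new["b_out"] - coarse_old["b_out"]
    return out

fine = init_params(6)
keep = [0, 2, 4]                 # assumed rule: keep every second hidden node
coarse = restrict(fine, keep)
print(num_params(fine), num_params(coarse))  # prints 19 10
```

In this sketch the coarse problem has 10 unknowns instead of 19, and any update computed on the coarse network is transferred back as a correction to the corresponding fine-level parameters, leaving the remaining nodes unchanged.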

Furthermore, we want to promote sparsity of the neural network. To this end, we add an L1 penalty on the network parameters to the objective function, which yields a nonsmooth optimization problem. To complete the framework, we therefore study not only neural networks but also the solution of nonsmooth optimization problems with a multilevel trust-region algorithm.
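As a sketch in notation that is assumed here rather than taken from the project (θ for the network parameters, f for the smooth loss, λ > 0 for the penalty weight, t > 0 for a step size), the penalized training problem and the proximal operator of the L1 term can be written as:

```latex
\[
  \min_{\theta \in \mathbb{R}^n} \; f(\theta) + \lambda \|\theta\|_1,
  \qquad \|\theta\|_1 = \sum_{i=1}^{n} |\theta_i| .
\]
\[
  \bigl[\operatorname{prox}_{t\lambda\|\cdot\|_1}(v)\bigr]_i
  = \operatorname{sign}(v_i)\,\max\{\,|v_i| - t\lambda,\; 0\,\},
  \qquad i = 1, \dots, n .
\]
```

The second formula is the well-known soft-thresholding operator: it sets every component with magnitude at most tλ exactly to zero, which is how the L1 term produces sparse parameters.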

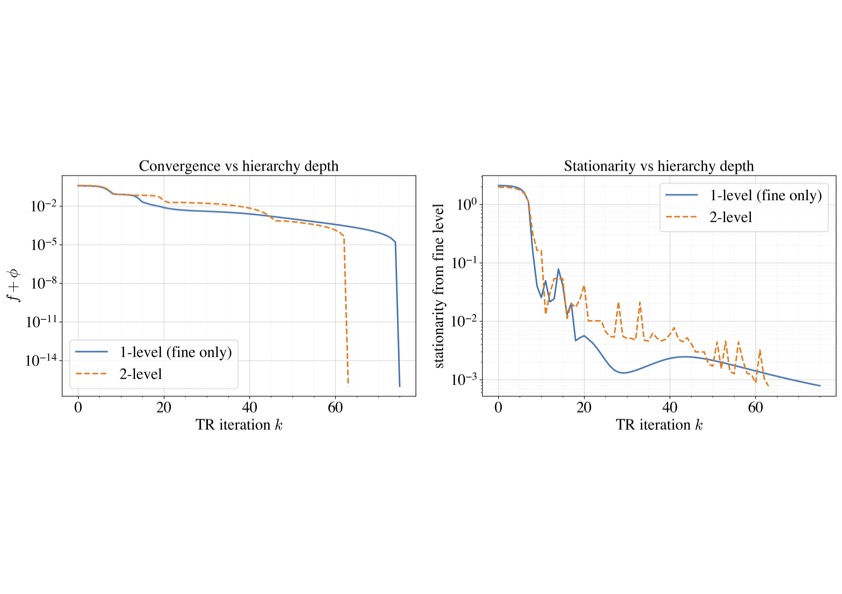

In this work, we developed a recursive trust-region algorithm for minimizing the sum of a smooth nonconvex function and a nonsmooth convex function in a finite-dimensional space. Our algorithm employs the proximal gradient step as a generalization of the Cauchy point, which allows us to prove the existence of a trial step and, ultimately, global convergence of the trust-region algorithm. The numerical experiments confirm that the proposed multilevel proximal trust-region (RMNTR) method is highly efficient and robust across various nonsmooth optimization problems, including PDE-constrained optimal control and physics-informed neural network (PINN) training. In the Burgers’ equation control problem, the multilevel algorithm produced results of comparable accuracy while achieving a clear computational advantage over the single-level approach. For the semilinear elliptic optimal control problem, the method maintained reliable convergence on increasingly fine meshes, demonstrating strong scalability and reduced overall computational effort. In the PINN example, the RMNTR method required far fewer training iterations and significantly less runtime while achieving the same loss accuracy and stationarity as the baseline. Overall, these results indicate that incorporating a multilevel hierarchy into the proximal trust-region framework brings substantial performance benefits: it accelerates convergence without compromising solution quality, which makes it a powerful approach for large-scale nonsmooth optimization in scientific machine learning and PDE-constrained applications.
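The role of the proximal gradient step as a generalized Cauchy point can be illustrated with a simplified, single-level sketch. It is not the RMNTR algorithm itself: it has no level hierarchy, and it assumes a toy smooth part f(x) = ½‖x − a‖² with an L1 penalty. The names, the acceptance threshold `eta`, and the radius update factors are hypothetical choices for illustration.

```python
import math

def soft_threshold(v, tau):
    """Proximal operator of tau * ||.||_1 (componentwise soft-thresholding)."""
    return [math.copysign(max(abs(vi) - tau, 0.0), vi) for vi in v]

def objective(x, a, lam):
    """Toy objective (an assumption): 0.5 * ||x - a||^2 + lam * ||x||_1."""
    smooth = 0.5 * sum((xi - ai) ** 2 for xi, ai in zip(x, a))
    return smooth + lam * sum(abs(xi) for xi in x)

def tr_prox_gradient(a, lam, x, radius=0.5, eta=0.1, max_iter=50):
    """Single-level trust-region loop whose trial step is a proximal gradient step."""
    for _ in range(max_iter):
        g = [xi - ai for xi, ai in zip(x, a)]  # gradient of the smooth part
        t = 1.0
        while True:
            # Proximal gradient step; halve t until the step fits the trust region.
            trial = soft_threshold([xi - t * gi for xi, gi in zip(x, g)], t * lam)
            s = [ti - xi for ti, xi in zip(trial, x)]
            s_norm = math.sqrt(sum(si * si for si in s))
            if s_norm <= radius:
                break
            t *= 0.5
        if s_norm < 1e-12:  # zero step: x is (numerically) stationary
            break
        # Predicted reduction of the model: quadratic model of f plus exact L1 term.
        l1_change = lam * (sum(abs(ti) for ti in trial) - sum(abs(xi) for xi in x))
        pred = -(sum(gi * si for gi, si in zip(g, s)) + 0.5 * s_norm ** 2 + l1_change)
        ared = objective(x, a, lam) - objective(trial, a, lam)  # actual reduction
        rho = ared / pred
        if rho >= eta:
            x = trial  # accept the trial step
            if rho > 0.75:
                radius *= 2.0  # model agrees well with the objective: enlarge region
        else:
            radius *= 0.5  # reject the step and shrink the region
    return x

a = [3.0, -0.2, 1.5]
x_star = tr_prox_gradient(a, lam=0.5, x=[0.0, 0.0, 0.0])
print(x_star)
```

For this toy problem the minimizer is the soft-thresholding of `a` with threshold `lam`, so the small second component is driven exactly to zero. Because the quadratic model is exact here, every trial step is accepted; for a general nonconvex smooth part, the ratio test decides between accepting the step and shrinking the radius.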

Related Publications

R. Baraldi, M. Hintermüller, Q. Wang, A multilevel proximal trust-region method for nonsmooth optimization with applications, WIAS Preprint 3235 (2025)

Related Pictures